Every PyRun workspace launches with GitHub Copilot and OpenCode pre-configured via MCP servers and already connected to your AWS account. Ask your AI agent to deploy, monitor, query, and scale your cloud workloads in natural language. Workspaces also include full ML framework support for training and inference.

Model Context Protocol (MCP) servers connect your AI agents to your AWS account automatically on every workspace launch. No manual configuration is needed.

GitHub Copilot comes pre-installed and cloud-aware. It can see your AWS resources, suggest deployment code, and help debug distributed Python workloads.

OpenCode, an open-source AI coding agent, is also pre-configured in your PyRun workspace and connected to your AWS environment via MCP from the start.

Ask your AI to list S3 buckets, check Lambda logs, trigger a job, or monitor usage. Cloud operations through conversation.
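For a sense of what such a request might translate to, here is a minimal sketch of two of the operations above written against boto3. It is an illustration, not the code PyRun or its agents actually run. The helper names and the `my-function` placeholder are hypothetical, and the log group path assumes Lambda's default `/aws/lambda/<function name>` naming.

```python
from typing import Any


def bucket_names(s3_client: Any) -> list[str]:
    """Return the names of all S3 buckets visible to the given client."""
    response = s3_client.list_buckets()
    return [bucket["Name"] for bucket in response.get("Buckets", [])]


def lambda_log_lines(logs_client: Any, function_name: str, limit: int = 20) -> list[str]:
    """Return up to `limit` log messages from a Lambda function's default log group."""
    response = logs_client.filter_log_events(
        logGroupName=f"/aws/lambda/{function_name}",  # default group name (assumption)
        limit=limit,
    )
    return [event["message"].rstrip() for event in response.get("events", [])]


def main() -> None:
    # boto3 is imported here so the helpers above can be tried with stub clients.
    import boto3

    print(bucket_names(boto3.client("s3")))
    # "my-function" is a placeholder, not a real resource.
    for line in lambda_log_lines(boto3.client("logs"), "my-function"):
        print(line)
```

Calling `main()` inside a workspace with AWS credentials available would print the bucket names followed by the log lines.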

Simplify complex AI pipelines. PyRun's integrated environment and automated management let you focus on model development and experimentation.

Easily configure runtimes for TensorFlow, PyTorch, Scikit-learn, Dask-ML, and more using PyRun's Runtime Management.
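Once a runtime is configured, one quick way to confirm which of these frameworks it provides is to check from Python. The sketch below uses only the standard library. The function name and the module-to-package mapping are illustrative assumptions, not part of PyRun's Runtime Management.

```python
from importlib import metadata
from importlib.util import find_spec

# Import name -> distribution name for the frameworks mentioned above.
FRAMEWORKS = {
    "tensorflow": "tensorflow",
    "torch": "torch",
    "sklearn": "scikit-learn",
    "dask_ml": "dask-ml",
}


def installed_frameworks(frameworks=FRAMEWORKS):
    """Map each importable module to its installed version (None if unknown)."""
    found = {}
    for module_name, dist_name in frameworks.items():
        if find_spec(module_name) is None:
            continue  # module is not available in this runtime
        try:
            found[module_name] = metadata.version(dist_name)
        except metadata.PackageNotFoundError:
            found[module_name] = None  # importable, but no version metadata
    return found


print(installed_frameworks())
```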

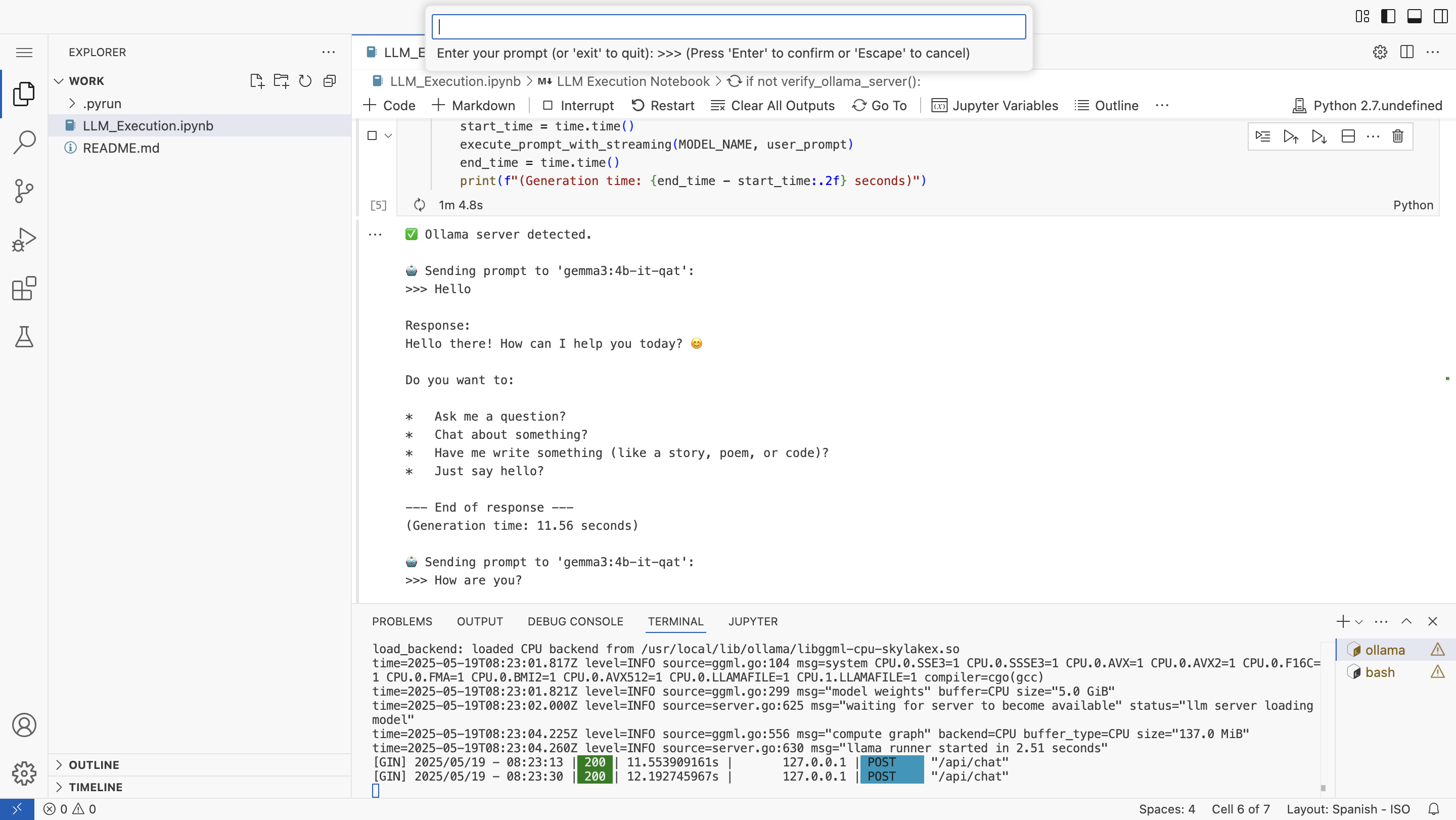

Run and experiment with Large Language Models (LLMs) like Llama 3 locally within your PyRun workspace using Ollama, directly via notebooks.
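As a rough idea of what a notebook cell could look like, the sketch below sends a prompt to a local Ollama server over its REST API using only the standard library. It assumes Ollama is running on its default port (11434) and that a model tagged `llama3` has already been pulled. The helper names are illustrative.

```python
import json
import urllib.request

# Default local Ollama endpoint; adjust if your server listens elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3") -> str:
    """Send the prompt and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `generate("Explain serverless computing in one sentence.")` returns the model's reply as a string.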

PyRun handles all the wiring so your AI agent is cloud-ready the moment your workspace opens.

Create a PyRun workspace with a single click. Your VS Code-like IDE starts immediately.

MCP servers automatically discover and connect to your AWS account. No manual config, no credentials to paste.

GitHub Copilot and OpenCode are pre-installed and fully aware of your cloud environment, S3 data, and available services.

Ask your agent to write a Lithops job, trigger a Lambda, analyze S3 data, or debug a pipeline. Then click Run.
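To give a flavor of the kind of Lithops job an agent might draft, here is a minimal sketch that counts words across text chunks in parallel. The function names and the word-count task are illustrative assumptions. It relies on Lithops' `FunctionExecutor`, `map`, and `get_result` calls and on whatever Lithops backend the workspace has configured.

```python
def count_words(text: str) -> int:
    """Count whitespace-separated words in one chunk of text."""
    return len(text.split())


def total_words_with_lithops(chunks: list[str]) -> int:
    """Fan the chunks out as parallel function calls and sum the results."""
    import lithops  # imported lazily so count_words can be tried locally

    fexec = lithops.FunctionExecutor()
    fexec.map(count_words, chunks)  # one invocation per chunk
    return sum(fexec.get_result())  # waits for all invocations to finish
```

Keeping the per-chunk function free of Lithops imports makes it easy to check locally before running it at scale.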

Get started quickly with pre-built AI pipelines showcasing PyRun's capabilities for various ML tasks.

An AI pipeline for audio keyword recognition using TensorFlow. Demonstrates audio data preprocessing, model training, and inference.

Shows how to use Dask-ML for distributed machine learning tasks, scaling your model training across multiple nodes for efficiency.

An AI pipeline for image classification tasks using TensorFlow. Covers data loading, model building, data augmentation, and evaluation.

An interactive notebook environment to run and experiment with Large Language Models (LLMs) like Llama 3 locally within your PyRun workspace using Ollama.

We're continuously expanding PyRun's AI capabilities. Upcoming enhancements will make the agentic development experience even more powerful.

Launch a workspace with GitHub Copilot and OpenCode pre-connected to your AWS account. Train ML models, deploy serverless jobs, and chat with your cloud, all for free during beta.
